Further options set the number of decimal places for the output of numbers in the model (default 2) and the desired batch size for batch prediction (default 100).

The most interesting option may be the -layer specification. This option can be used multiple times and defines the architecture of the network layer-wise. The above setup builds a network with one hidden layer, having 10 output units using the ReLU activation function, followed by an output layer with the softmax activation function, using a multi-class cross-entropy loss function (MCXENT) as optimization objective.

Another important option is the neural network configuration -conf, in which you can set up hyperparameters for the network. Available options can be found in the Java documentation (the field commandLineParamSynopsis indicates the command-line parameter name for each available method).

The network configuration option exposes further hyperparameter tuning. The layer specification option lets the user specify the sequence of layers that build the neural network architecture. The user can choose from the available layers, and each layer can be further configured (for example, through the ConvolutionLayer's options).

As explained further in the data section, depending on the dataset a certain InstanceIterator has to be loaded that handles parsing of certain data types (text/image). The iterator can be selected from the Dl4jMlpClassifier window via the instance iterator option. The ImageInstanceIterator exposes its own set of options.

Assuming weka.jar is on the CLASSPATH, a first look at the available command-line options of the Dl4jMlpClassifier is shown with $ java weka.Run .Dl4jMlpClassifier -h

Below the general options, the specific ones are listed under "Options specific to Dl4jMlpClassifier". The option descriptions include: the name of the log file to write loss information to (default: no log file); the queue size for asynchronous data transfer (default: 0, synchronous transfer); a flag that, if set, runs the classifier in debug mode, which may output additional info to the console; and a flag that, if set, skips checking classifier capabilities before the classifier is built.

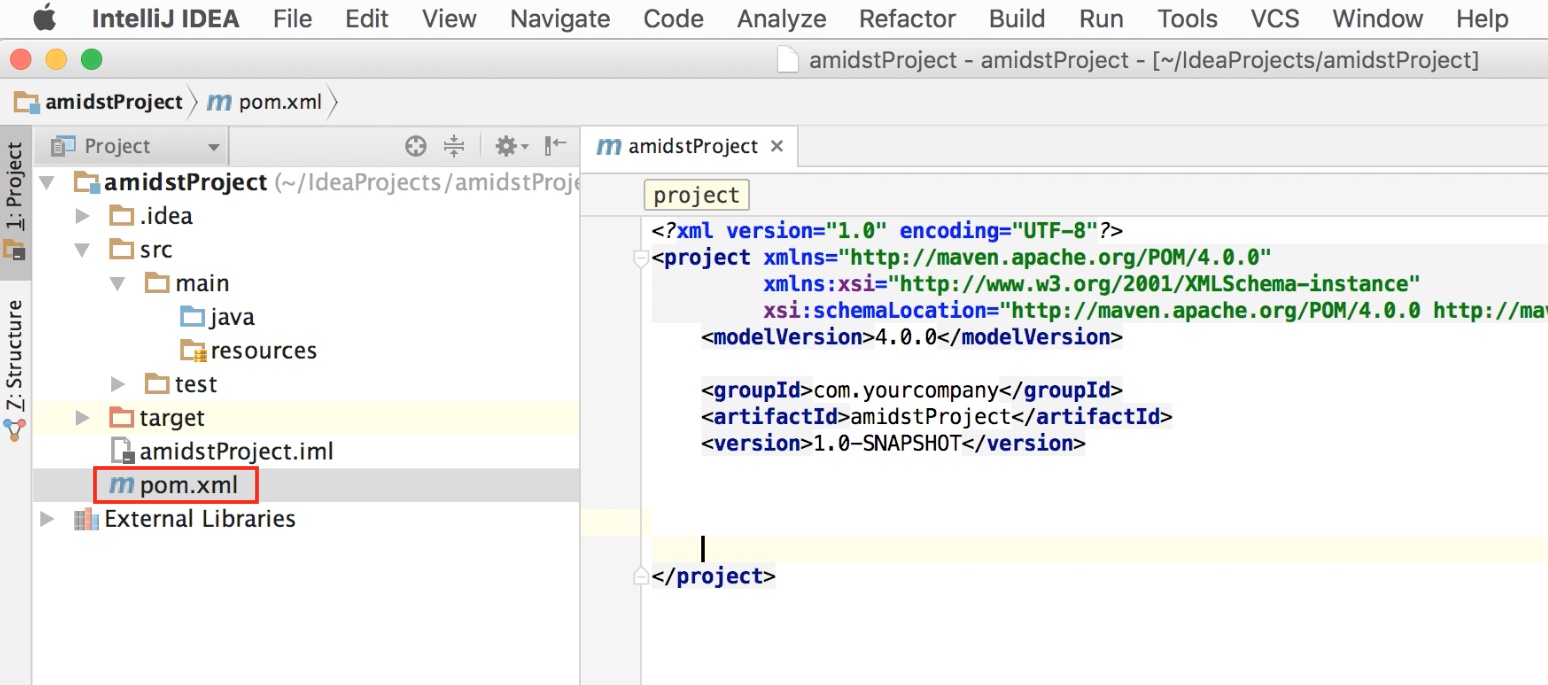

If you are new to Weka, a good resource to get started is the Weka manual.

Like most of Weka, WekaDeeplearning4j's functionality is accessible in three ways, all of which are explained in the following. The main classifier exposed by this package is named Dl4jMlpClassifier. Simple examples are given in the examples section for the Iris dataset and the MNIST dataset. Make sure your WEKA_HOME environment variable is set. The Dl4jMlpClassifier can be configured as shown in image 1.

artifactId: specifies the id for the artifact (project). An artifact is something that is either produced or used by a project. Examples of artifacts produced by Maven for a project include JARs, source and binary distributions, and WARs.

version: specifies the version of the artifact under the given group.

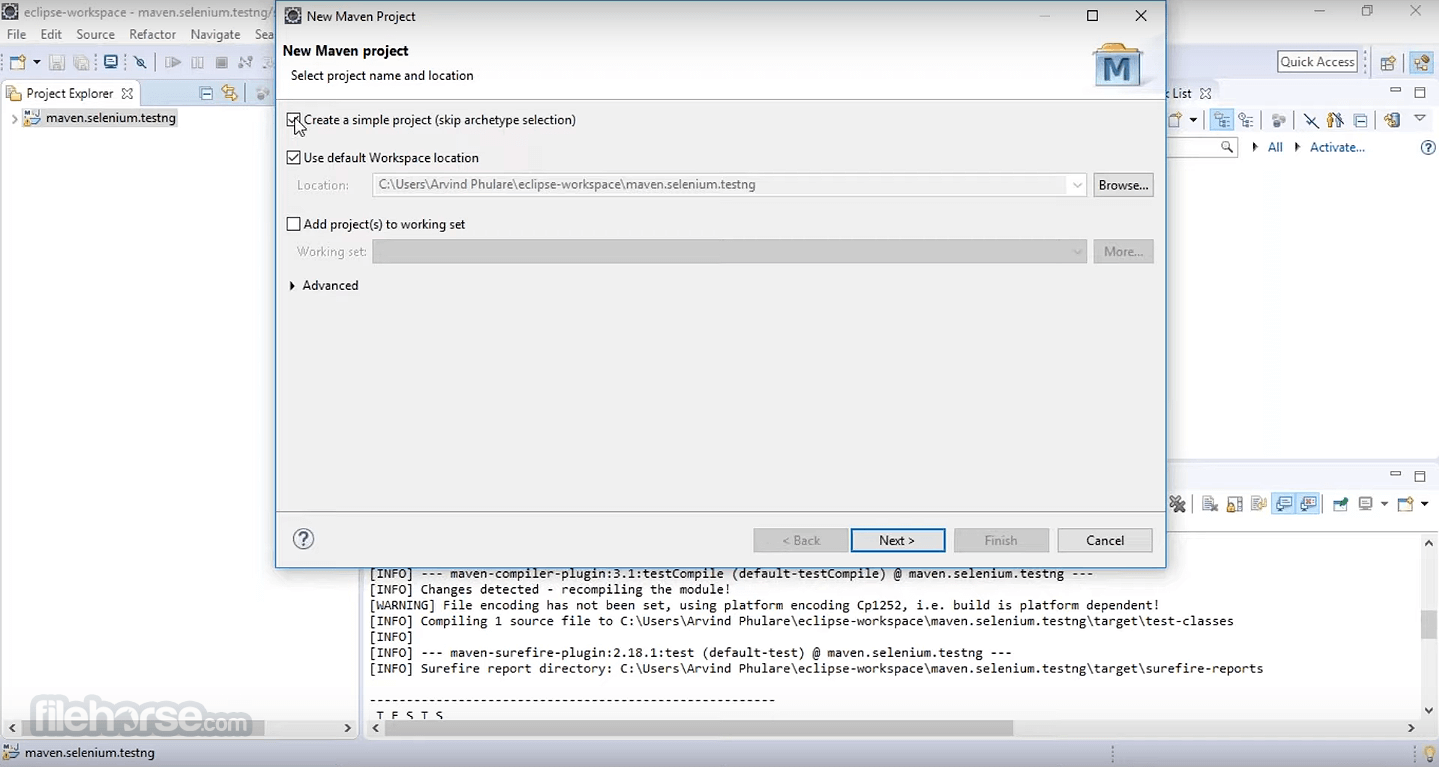

POM is an acronym for Project Object Model. The pom.xml file contains information about the project and the configuration Maven needs to build it, such as dependencies, build directory, source directory, test source directory, plugins, and goals. Maven reads the pom.xml file, then executes the goal.

Before Maven 2, this file was named project.xml. Since Maven 2 (and also in Maven 3), it is named pom.xml.

To create a simple pom.xml file, you need the following elements:

groupId: specifies the id for the project group.
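
As a rough sketch of how the elements described in this section fit together, a minimal pom.xml could look like the following. The groupId, artifactId, and version values are hypothetical placeholders chosen for illustration. The enclosing project root element and the modelVersion element are part of the standard Maven POM layout rather than something taken from the text above.

```xml
<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0
                             http://maven.apache.org/xsd/maven-4.0.0.xsd">

  <!-- POM model version understood by Maven 2 and Maven 3 -->
  <modelVersion>4.0.0</modelVersion>

  <!-- Hypothetical id for the project group -->
  <groupId>com.example.app</groupId>

  <!-- Hypothetical id for the artifact (project) -->
  <artifactId>my-app</artifactId>

  <!-- Hypothetical version of the artifact under the given group -->
  <version>1.0</version>

</project>
```

Together, groupId, artifactId, and version identify the artifact that the build produces, for example a JAR.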